AMD Ryzen™ AI Embedded P100 Series

Computer-on-Modules for scalable Edge AI computing

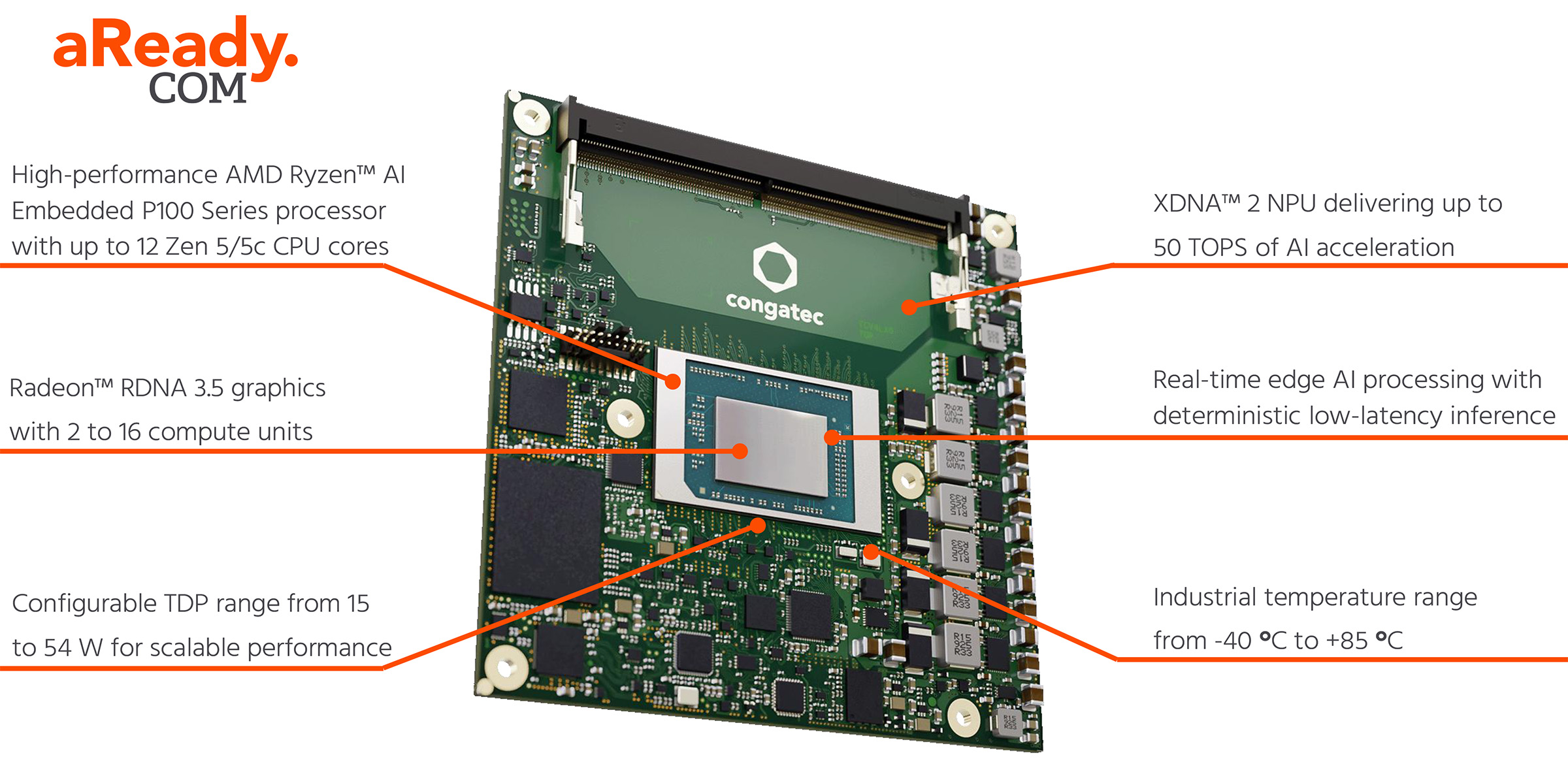

COM Express Modules with AMD Ryzen™ AI Embedded P100 Series Processors

Highly scalable processor series with up to 12 CPU cores, based on AMD’s innovative Zen 5/5c architecture, with industrial temperature range options

AMD Ryzen™ AI Embedded P100 Series processors deliver powerful, highly scalable performance for Edge AI workloads. They combine 4 to 12 Zen 5/5c CPU cores, RDNA™ 3.5 graphics, and an integrated XDNA™ 2 NPU with up to 50 TOPS of AI acceleration in an energy-efficient System-on-Chip (SoC).

Scalable Edge AI Performance

Delivering up to 50 TOPS of AI performance, AMD Ryzen™ AI Embedded P100 processors are ideally suited for AI-enabled edge applications in industrial automation, medical technology, machine vision, smart city infrastructure, and professional gaming. By combining CPU, GPU, and NPU in a single energy-efficient SoC, the processors enable real-time AI processing directly at the edge without the need for cloud connectivity. Processing data where it is generated reduces latency and improves information security.

Built on the advanced Zen 5/5c architecture with up to 12 CPU cores, the AMD Ryzen™ AI Embedded P100 processors set a new benchmark for scalable x86 performance at the edge. AMD combines high-performance Zen 5 cores for demanding workloads with compact, power-efficient Zen 5c cores that reduce power consumption during less demanding or background tasks. Uniquely, both core types are built on the same microarchitecture.

This means that all cores offer the full Zen 5 feature set; the main difference between the two core types is their maximum clock frequency. This brings several advantages for embedded applications. Because all cores execute instructions identically, the consistent execution environment simplifies programming of real-time applications where timing is critical. Task scheduling does not require complex handshaking between different core types, as the OS sees a homogeneous pool of computing resources for CPU-centric tasks. This approach is also one reason for the excellent power scalability of the AMD Ryzen™ AI Embedded P100 Series.

AMD’s integrated XDNA™ 2 NPU provides up to 50 TOPS of AI performance for low-latency AI inference. It allows demanding AI workloads such as computer vision, anomaly detection, optical character recognition (OCR), and real-time data analytics to run locally on edge devices. Because inference runs locally instead of depending on a cloud connection, response times are highly deterministic.

From cost-optimized to high-performance embedded edge designs

With a configurable TDP of 15 to 54 W, developers can implement a wide range of designs on one single platform, from passively cooled, SWaP-C (size, weight, power, and cost) optimized systems to high-performance edge platforms. Because CPU, GPU, and NPU are integrated on a single SoC, no additional AI accelerator cards are needed. This keeps the overall system design streamlined and cost-efficient, with reduced design effort and a smaller bill of materials.

Facts, Features & Benefits

| Facts | Features | Benefits |
|---|---|---|
| Heterogeneous computing architecture | Up to 12 Zen 5/5c CPU cores, Radeon™ RDNA 3.5 graphics, and an integrated XDNA™ 2 NPU with up to 50 TOPS in a single SoC. | Handles demanding edge AI workloads without discrete accelerators, optimizing performance while reducing size, weight, power, and system cost (SWaP-C). |
| Advanced AMD Zen 5/5c architecture | Performance-optimized Zen 5 cores and low-power Zen 5c dense cores use the exact same microarchitecture. | A consistent execution environment simplifies programming for real-time applications, because all cores support the same instruction set. In addition, task scheduling does not require complex handshaking between different core types. |
| RDNA™ 3.5 GPU | Powerful display and graphics capabilities for up to 4 independent displays | Enables immersive HMI concepts, digital signage, and multi-display industrial applications that need high I/O bandwidth. High frame rates also support demanding entertainment applications such as gaming, which require scalable, cost-optimized embedded platforms. |
| XDNA™ 2 NPU | Up to 50 TOPS AI processing performance | Enables low-latency AI inference, so that demanding workloads such as computer vision, anomaly detection, OCR, and real-time data analytics run locally on edge devices. Local processing delivers more deterministic response times than cloud-connected designs. |
| Rich I/O set | Up to eight x1 PCIe Gen 4 lanes | The high number of individual PCIe lanes is ideal for I/O-rich applications that connect numerous peripherals. In test and measurement, for instance, designers can connect various interface cards for sensor fusion and digital twins without integrating a discrete PCIe switch on the carrier board, simplifying the overall system design. |
| Industrial-grade robustness | Extended temperature support from -40 to +85 °C and long-term availability | Helps harden system designs for harsh outdoor and industrial environments with extreme temperatures. Improves mean time between failures (MTBF), reducing maintenance costs, extending overall system lifespan, and minimizing total cost of ownership (TCO). Combined with long-term availability, it provides high investment security by avoiding frequent and costly redesigns, especially for certified applications in the medical and aerospace industries. |
| Programmable TDP configuration | Highly scalable power envelope from 15 W to 54 W | Allows performance scaling from fanless, sealed systems to high-performance, actively cooled platforms. Simplifies building complete product families or different applications on one embedded computing platform, minimizing non-recurring engineering (NRE) effort and total cost of ownership (TCO) to improve return on investment. |

Typical application areas

Mobile Medical Imaging Devices

On-device AI accelerates ultrasound and other mobile imaging systems by combining GPU-powered visualization with NPU-based image enhancement and analysis, enabling faster point-of-care diagnostics.

Industrial Machine Vision

Integrated CPU and NPU performance enables real-time quality inspection, anomaly detection, and optical character recognition, improving production efficiency and reducing waste.

Traffic Surveillance & Smart City

AI-based image recognition supports intelligent traffic monitoring, incident detection, and data analytics while extended temperature support ensures reliable operation in harsh wayside environments.

Professional Gaming Systems

High-performance integrated graphics deliver immersive visual experiences for modern gaming and entertainment systems such as advanced slot machines.

AI-enabled Point-of-Sale Systems

Integrated AI and graphics capabilities enhance retail applications with intelligent customer analytics, automated checkout functions, and dynamic digital signage.

Robotics & Autonomous Systems

Deterministic, low-latency edge-AI processing enables real-time perception, localization, and decision-making for robotics and autonomous platforms without relying on cloud connectivity.